In the recent Washington Post article How the Obama Campaign Won the Race for Voter Data, Joel Kowsky writes about how the 2012 Obama campaign used analytics to improve their campaign strategy, and to ultimately secure the presidential victory.

Regardless of where you stand on the political spectrum, it’s hard to argue that Barack Obama’s campaign strategy was anything short of impressive. As soon as Obama took office in 2009, his team began preparing for his 2012 campaign. From the start there was a strong emphasis on measuring the campaign’s progress. Jim Messina, Obama’s 2012 campaign manager, stated

“There’s always been two campaigns since the Internet was invented, the campaign online and the campaign on the doors. What I wanted was, I didn’t care where you organized, what time you organized, how you organized, as long as I could track it, I can measure it, and I can encourage you to do more of it.”

The team began by conducting a postmortem study on their 2008 campaign where they analyzed the number of homes visited, phone calls placed, and voters registered by each field organizer and volunteer. The result was a 500 page report which highlighted areas of improvement for the 2012 campaign.

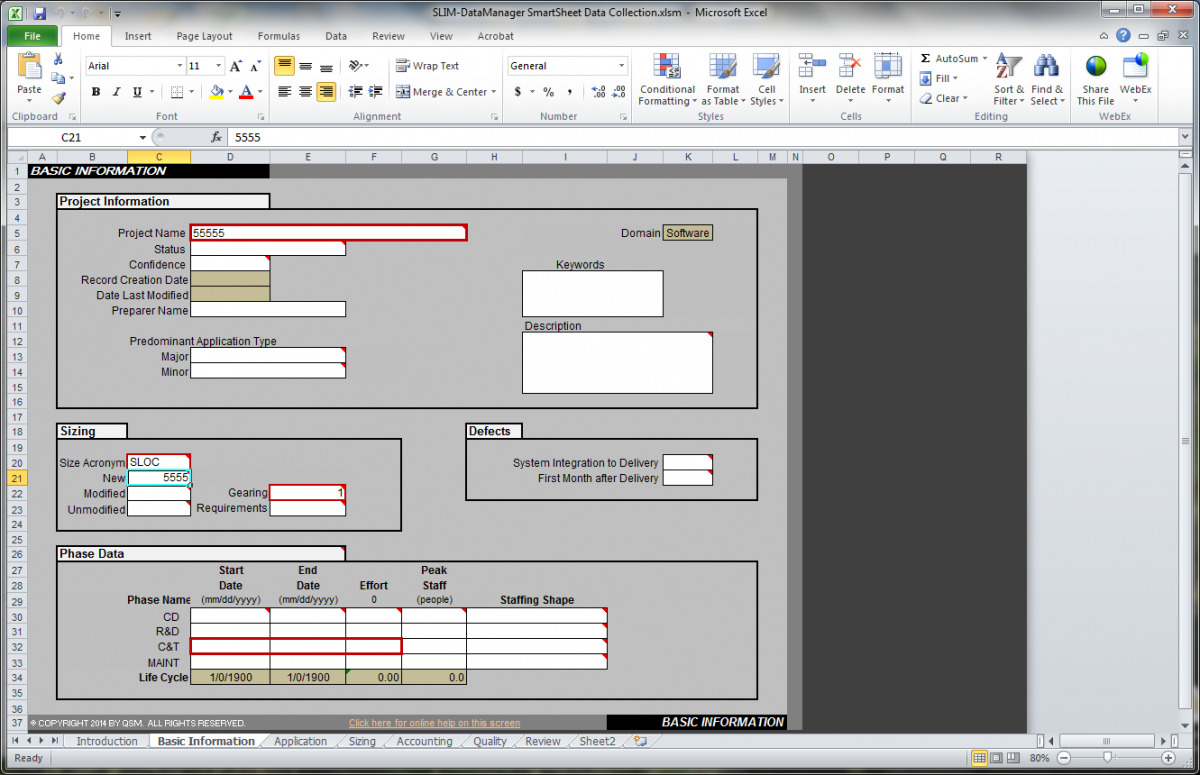

The suggestions led the Obama campaign to invest in building customized software that would integrate all the data the campaign had collected on voters, donors, and volunteers and link to individual voter profile. This software analyzed previously collected data to calculate the likelihood of candidate support, the likelihood of election day turnout, and the degree of persuasion for each voter.